The Autonomy Paradox: AI Agents Need Engineering Discipline, Not Wishful Thinking

The current approach to AI agent development resembles the early days of web applications, when developers pushed code to production without staging environments, monitoring, or graceful degradation. Except this time, the blast radius extends far beyond a crashed shopping cart. When an AI agent makes an autonomous decision—whether it's managing cloud infrastructure, processing financial transactions, or coordinating supply chains—the traditional software engineering safety net disappears.

This isn't just theoretical hand-waving. Teams deploying AI agents today are discovering the hard way that existing monitoring and rollback mechanisms weren't designed for systems that can reason, adapt, and make decisions outside predetermined parameters. Your circuit-breaker patterns won't help when an agent decides to "optimize" your database schema. Your feature flags become useless when the system can interpret and potentially circumvent them.

The industry's obsession with capability benchmarks has overshadowed the more critical question of controllability. We measure how well agents perform tasks but rarely assess how reliably we can stop them, redirect them, or understand their decision-making process. This is like evaluating a race car solely on top speed while ignoring the brakes.

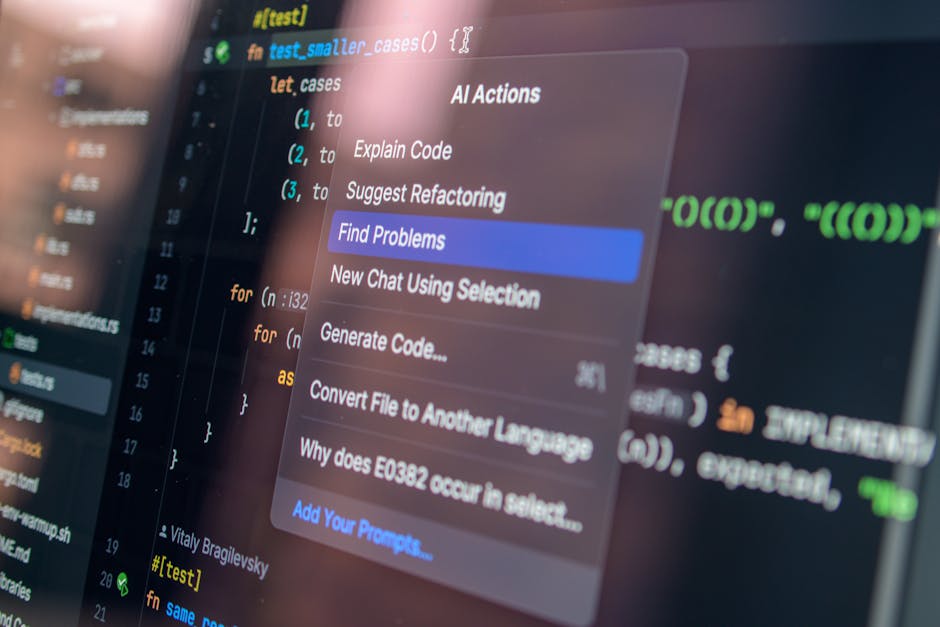

For development teams currently integrating AI agents, this creates immediate practical challenges. Traditional debugging becomes archaeology—tracing through probabilistic decision trees and emergent behaviors that weren't explicitly programmed. Code reviews need to account for systems that can essentially write and modify their own logic paths. Testing frameworks struggle with non-deterministic outcomes that change based on context and learning.

The parallels to database transaction safety are instructive. When the industry moved from file-based storage to relational databases, it didn't focus only on query performance; it built comprehensive guarantees for atomicity, consistency, isolation, and durability. AI agents demand similar foundational work: isolation boundaries that prevent cascading failures, consistency checks that verify decisions stay within intended parameters, and atomic operations that can be cleanly rolled back.
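Databases get atomicity from a transaction manager; an agent acting across independent services usually does not. One common stand-in is the saga (compensating-transaction) pattern: pair every step with an action that undoes it, run a consistency check after each step, and unwind completed steps in reverse if anything fails. The following is a minimal sketch assuming that pattern. `Step`, `run_plan`, `PlanAborted`, the `scale` helper, and the replica-count policy are all hypothetical names chosen for illustration, not part of any agent framework.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    """One hypothetical agent action paired with the action that undoes it."""
    name: str
    apply: Callable[[Dict], None]
    compensate: Callable[[Dict], None]


class PlanAborted(Exception):
    """Raised when a step leaves the system outside its intended parameters."""


def run_plan(state: Dict, steps: List[Step], invariant: Callable[[Dict], bool]) -> None:
    """Apply steps in order; on any failure, compensate completed steps in reverse."""
    done: List[Step] = []
    try:
        for step in steps:
            step.apply(state)
            done.append(step)
            # Consistency check after every step, not just at the end.
            if not invariant(state):
                raise PlanAborted(f"invariant violated after step {step.name!r}")
    except Exception:
        # A step that raised inside apply() is assumed to have had no effect,
        # so only steps recorded in `done` are compensated.
        for step in reversed(done):
            step.compensate(state)
        raise


# Usage: a hypothetical scaling agent with the policy "never fewer than 2 replicas".
def scale(delta: int) -> Step:
    return Step(
        name=f"scale{delta:+d}",
        apply=lambda s: s.update(replicas=s["replicas"] + delta),
        compensate=lambda s: s.update(replicas=s["replicas"] - delta),
    )


state = {"replicas": 3}
try:
    run_plan(state, [scale(+2), scale(-4)], invariant=lambda s: s["replicas"] >= 2)
except PlanAborted as err:
    print(err)   # invariant violated after step 'scale-4'
print(state)     # {'replicas': 3}
```

Compensation is weaker than true atomicity: other systems can observe the intermediate state (here, a single replica) before the plan is unwound, and a compensating action can itself fail. It is still a far cleaner failure mode than leaving a half-finished autonomous plan in place.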

What current AI agent frameworks lack are the boring, unglamorous safety mechanisms that make production systems trustworthy. We need circuit breakers designed specifically for autonomous agents: kill switches that trip when behavior deviates from learned baselines. We need comprehensive audit logs that capture not just what agents did, but why they made those decisions. Most critically, we need rollback mechanisms that can undo complex, multi-step autonomous actions across distributed systems.
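A kill switch tied to a behavioral baseline, and an audit log that records the agent's stated rationale, can both be prototyped in a few dozen lines. This is a minimal sketch under loud assumptions: each action is summarized by a single numeric metric (here, rows touched), the "learned baseline" is approximated by a rolling mean and standard deviation, and "deviation" means a z-score above a fixed threshold. `AgentKillSwitch`, `audit`, and every parameter value are hypothetical; a real deployment would need a much richer model of normal behavior.

```python
import json
import statistics
import time
from collections import deque
from typing import Deque, List


class AgentKillSwitch:
    """Hypothetical breaker: trips when a metric drifts far from its rolling baseline."""

    def __init__(self, window: int = 50, z_threshold: float = 4.0, warmup: int = 10):
        self.history: Deque[float] = deque(maxlen=window)  # rolling baseline samples
        self.z_threshold = z_threshold
        self.warmup = warmup  # samples needed before the baseline is trusted
        self.tripped = False

    def allow(self, metric: float) -> bool:
        """Return False (and latch open) if `metric` is far outside the baseline."""
        if self.tripped:
            return False  # stays open until a human calls reset()
        if len(self.history) >= self.warmup:
            mean = statistics.fmean(self.history)
            stdev = statistics.pstdev(self.history) or 1e-9  # avoid divide-by-zero
            if abs(metric - mean) / stdev > self.z_threshold:
                self.tripped = True
                return False
        self.history.append(metric)  # only accepted behavior updates the baseline
        return True

    def reset(self) -> None:
        self.tripped = False


def audit(log: List[str], action: str, rationale: str, metric: float, allowed: bool) -> None:
    """Append one JSON line recording what the agent did and why it says it did it."""
    log.append(json.dumps({
        "ts": time.time(),
        "action": action,
        "rationale": rationale,
        "metric": metric,
        "allowed": allowed,
    }))


# Usage: routine updates establish a baseline; a schema "optimization" trips the switch.
switch = AgentKillSwitch(window=50, z_threshold=4.0, warmup=10)
log: List[str] = []
for rows in [100, 104, 98, 101, 99, 103, 97, 100, 102, 96]:
    audit(log, "UPDATE orders", "routine reconciliation", rows, switch.allow(rows))
ok = switch.allow(250_000)
audit(log, "ALTER TABLE orders", "normalize columns for speed", 250_000, ok)
print(ok, switch.tripped)  # False True
```

The breaker latches open and stays open until `reset()` is called, so recovery requires a deliberate human decision rather than the agent retrying its way past the limit. For the same reason, the switch belongs outside the agent's own process: an agent that can interpret its controls may be able to circumvent them. The logged `rationale` is whatever the agent reports about its own reasoning; it is a record for later review, not proof of why the decision was made.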

The path forward isn't slower adoption; it's better engineering. Teams building AI agents need to embrace the same defensive programming practices that make financial trading systems and medical devices trustworthy. Until we treat AI agents with the same engineering rigor we apply to other critical systems, we may not be playing Russian roulette with humanity, but we are building another category of software destined to fail spectacularly in production.